This is the first post in a series about onetool. The second post dives deeper into how I built the Lua sandbox.

As part of this year's goals, I decided to invest a lot more time into learning and using AI tools. I'm very skeptical about AI and find it hard to cut through the hype, so I wanted to make sure I'm keeping my bias in check and not missing out on anything really important.

Because of that, during Carnival I was playing around with LLM tool calling, as any good Brazilian would [1], and it got me thinking: wouldn't it be better if we had a way of defining function calls, but also sequences of computations using the results of these calls, in a way that doesn't need a back and forth with the LLM? And wouldn't it be great if this interface was close to natural language, so that LLMs would already be trained on it and do well at writing it?

Turns out we already have that, and we've had it at least since the 1960s. They're called programming languages.

Quick side-note: when I started writing this post, I'd already developed the core functionality for onetool. Then, when researching for this post, I found out Cloudflare (and later Anthropic) had already beaten me to it, so this might not be as fresh an approach as I initially thought. I still think this is different enough to be useful, and hopefully by making it open source it can be used in more applications outside of the proprietary tools of these companies.

Think about it: we want to give the LLM a way of controlling its environment, extend its capabilities, and interact with external systems. What better way of doing so than a programming language? There are a lot of benefits to it, and you can read all about it in this great blog post about how MCPs were the wrong abstraction, but the one that stands out the most in my opinion is how much it can reduce costs by cutting down the number of tokens you have to exchange with the LLM provider.

In the next section, I'll discuss why I chose Rust and Lua, technical decisions, and the next steps. If you are only interested in the tool, it's here: caioaao/onetool. It's a Rust crate that defines a way of spawning a sandboxed Lua REPL for LLMs to interact with. It has adapters for some of the most popular Rust LLM libraries/frameworks: rig, mistral.rs, genai, and AI SDK.

It's still a toy project though, so use with care. Everything may break, and I might decide to change everything tomorrow :D. If you wanna see it in action, here's an example of solving the "Needle in a Haystack" challenge - where the agent has to find a magic number in a huge context (50 MB of random strings) - using onetool: examples/needle-in-haystack.rs.

Creating onetool

Ok, let's start with the language choice: why Rust? Let's get this out of the way: it's not because I want the safety guarantees it provides. I loved that it helped me, but it wasn't taken into consideration, and my first exploration was actually in Elixir.

The main reason I chose Rust is that it's a popular language, with great FFI support from languages I like (Elixir) or use (JavaScript). Having it implemented in such a universally available language will enable me to use it in different contexts.

As a bonus, I always wanted to give Rust a second try. I 'learned' (by learn I mean I used it in a couple personal projects) it years ago, but never really liked it. I gotta say, I had fun. And learning a new language is a lot easier with LLMs now, so that was a fun experience overall.

The next thing to decide was: what language should the AI write in?

These were my criteria:

- Interpreted: we can't depend on a compile-eval loop, and we need the runtime to persist across calls so the LLM can define variables, inspect them, etc.

- Easy to embed: we don't want harnesses having to manage the runtime for this

- Easy to sandbox: it might be a shocker to some, but giving too much power to an LLM can be dangerous

- Simple and expressive: allows AI to write small snippets to solve a problem

- Strong standard library: especially for string manipulation

- Mature, and relatively well known: so we have editor plugins, people are not so intimidated by it, etc

In the end, I settled on Lua. It's "widespread" enough due to it being the config language for Neovim, plus it's very common as a scripting language in games (or so I heard). As a nice bonus, it's Brazilian - bora!

An honorable mention goes to Lisp. I have to admit I was tempted to use some flavor of Lisp or even write my own interpreter, as it's a very simple language with a great track record as a scripting language. But I feel like people tend to be intimidated/put off by the parentheses - even though they're a feature of the language, not a limitation :(

Lua was great to work with. It has a very capable standard library, and the Rust crate made it very easy to sandbox it.

Which brings us to the next point: sandboxing.

This is the greatest benefit of an embedded scripting language over more direct control (e.g., allowing the LLM to write and execute bash scripts). This sandboxing approach provides strong guarantees: the LLM cannot access file I/O, network resources, or OS functions unless they are explicitly exposed. Barring implementation bugs in the sandbox itself, the attack surface is well-defined and controllable.

Since I wanted this to be safe by default, I made it sort of useless to start (more on next steps later). I blocked file access, OS functions, loading modules... Anything that could be used to run malicious code or break out of the sandbox is blocked.
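To make "safe by default" a bit more concrete, here's a minimal sketch of the deny-by-default idea in plain Rust. It is not how onetool is actually implemented: the allowlist and the `sandboxed_globals` helper are assumptions made for illustration. The names being filtered (`io`, `os`, `require`, `dofile`, `loadfile`, `package`) are the standard Lua globals that give access to files, the OS, and module loading.

```rust
use std::collections::BTreeSet;

/// Globals this sketch treats as safe. The list is an assumption made for
/// illustration, not onetool's actual allowlist.
const ALLOWED_GLOBALS: &[&str] = &[
    "assert", "ipairs", "math", "pairs", "print", "string", "table", "tostring", "type",
];

/// Keeps only allowlisted names, preserving input order. Anything that is not
/// explicitly allowed is dropped, so unknown globals are denied by default.
pub fn sandboxed_globals(available: &[&str]) -> Vec<String> {
    let allowed: BTreeSet<&str> = ALLOWED_GLOBALS.iter().copied().collect();
    available
        .iter()
        .filter(|name| allowed.contains(*name))
        .map(|name| name.to_string())
        .collect()
}

fn main() {
    // A few globals from a stock Lua environment, including the risky ones.
    let stock = [
        "print", "io", "string", "os", "require", "math", "dofile", "loadfile", "package",
    ];
    println!("{:?}", sandboxed_globals(&stock));
}
```

The design choice worth noting is the direction of the filter: an allowlist fails closed when a new global shows up, while a blocklist fails open.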

Ok, now that we have a Lua runtime that can run safe code, how will the LLM interact with it?

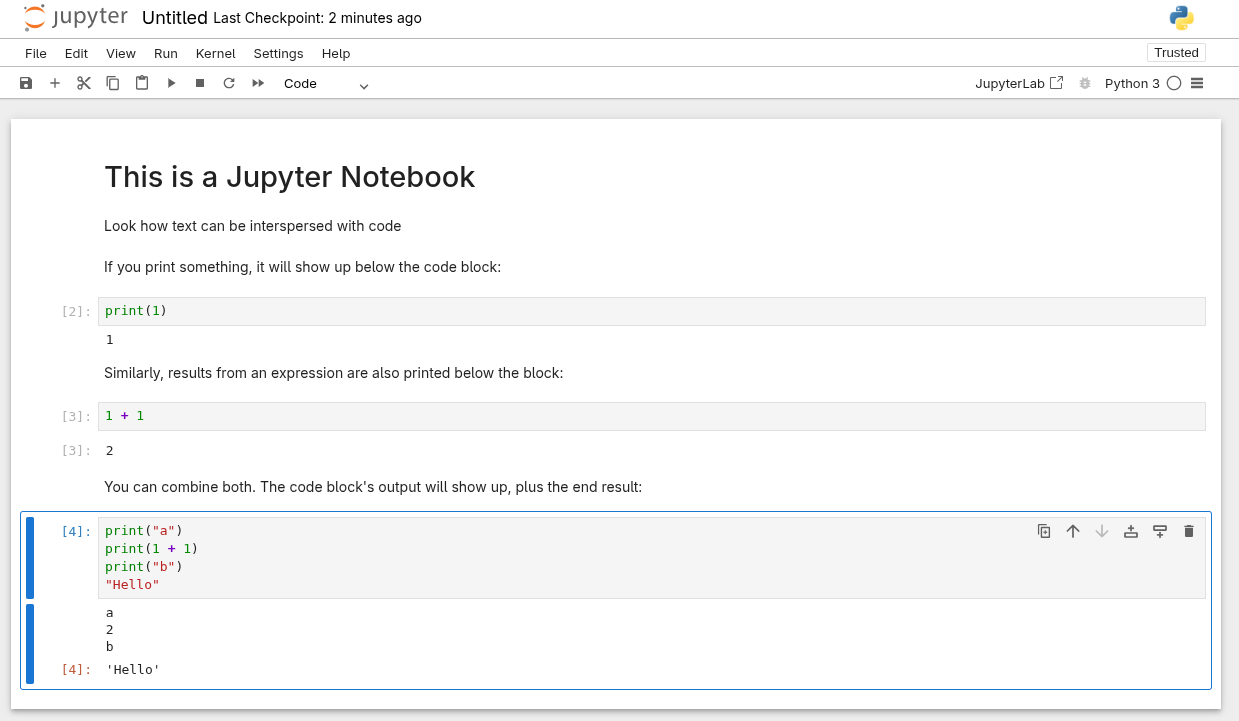

I decided to take some inspiration from literate programming tools, such as Org mode and Jupyter. In these tools, there is text intermingled with code blocks, backed by a runtime that's active during the whole session. When code blocks run, both the output (stuff sent to STDOUT) and the expression result are printed below it.

A chat with an LLM could be seen as this sort of notebook interface, where the code that the LLM writes is there, alongside the output, and the user and LLM messages. I think this maps well with the common mental model of an LLM as a chat interface.

To model this, we take advantage of what's already in the LLM's training data: tool calls. Since LLMs are trained specifically for calling tools, we can leverage that, and expose the code execution as a tool. So a chat with the LLM would look something like this (taken from a real example):

- User: What's the sum of the 10 first prime numbers?

- LLM: `{tool: "lua_repl", source_code: "local primes = {}\nlocal num = 2\n...return sum"}`

- Tool call: `{output: "", eval_result: "129"}`

- LLM: "The sum of the first 10 prime numbers is 129."
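As a sanity check on that transcript, here's a small sketch that models the tool result and recomputes the answer in Rust. The `ReplResult` struct only mirrors the two fields shown above (`output` and `eval_result`); it is a hypothetical type for illustration, not onetool's actual one, and `sum_first_primes` stands in for the Lua snippet the LLM wrote.

```rust
/// Mirrors the two fields of the tool result in the transcript above.
/// Hypothetical type, named here only for illustration.
#[derive(Debug, PartialEq)]
pub struct ReplResult {
    /// Everything the snippet printed to STDOUT.
    pub output: String,
    /// The value of the snippet's final expression, rendered as text.
    pub eval_result: String,
}

/// Stand-in for the Lua snippet: sums the first `n` primes by trial division
/// against the primes found so far.
pub fn sum_first_primes(n: usize) -> u64 {
    let mut primes: Vec<u64> = Vec::new();
    let mut candidate = 2u64;
    while primes.len() < n {
        if primes.iter().all(|p| candidate % p != 0) {
            primes.push(candidate);
        }
        candidate += 1;
    }
    primes.iter().sum()
}

fn main() {
    // Nothing is printed by the snippet, so `output` stays empty and the
    // answer travels back in `eval_result`.
    let result = ReplResult {
        output: String::new(),
        eval_result: sum_first_primes(10).to_string(),
    };
    println!("{result:?}");
}
```

The first ten primes are 2, 3, 5, 7, 11, 13, 17, 19, 23 and 29, which do add up to 129.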

The last thing to solve is tool discovery: how will the LLM know what functions are available in Lua for it to call? For that I took the simplest approach: a global variable, docs, that holds descriptions of all the available APIs. Adding a new doc from Rust is simple:

```rust
let repl = onetool::Repl::new(...);

repl.with_runtime(|lua| {
    register(lua, &LuaDoc {
        name: "my_fn".to_string(),
        typ: LuaDocTyp::Function,
        description: "Does something useful".to_string(),
    })?;
});
```

Then we can just instruct the LLM to check the docs variable for info on what is available. Simple, yet effective. The docs are there, and they don't bloat the LLM's context.
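To show why this keeps the context small, here's a sketch of a docs registry in plain Rust. The names `DocRegistry`, `DocEntry` and `DocKind` are made up for this example and are not onetool's types. The point is that descriptions sit in the runtime and only get rendered into text when the LLM asks for them.

```rust
use std::collections::BTreeMap;

/// Kind of thing being documented. Hypothetical, for illustration only.
#[derive(Debug, Clone, Copy, PartialEq)]
pub enum DocKind {
    Function,
    Table,
}

#[derive(Debug, Clone)]
pub struct DocEntry {
    pub kind: DocKind,
    pub description: String,
}

/// Holds one entry per exposed API, keyed (and therefore sorted) by name.
#[derive(Default)]
pub struct DocRegistry {
    entries: BTreeMap<String, DocEntry>,
}

impl DocRegistry {
    /// Adds or replaces the documentation for `name`.
    pub fn register(&mut self, name: &str, kind: DocKind, description: &str) {
        self.entries.insert(
            name.to_string(),
            DocEntry { kind, description: description.to_string() },
        );
    }

    /// Renders one line per entry. This is the text the LLM would see only
    /// when it chooses to inspect the docs.
    pub fn render(&self) -> String {
        self.entries
            .iter()
            .map(|(name, entry)| format!("{name} ({:?}): {}", entry.kind, entry.description))
            .collect::<Vec<_>>()
            .join("\n")
    }
}

fn main() {
    let mut docs = DocRegistry::default();
    docs.register("my_fn", DocKind::Function, "Does something useful");
    println!("{}", docs.render());
}
```

Compare this with sending every tool schema on every request: here the system prompt only needs one sentence telling the LLM where to look.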

The final step was to build the adapters so the REPL could be used with the most popular Rust AI libraries. For that I just implemented the integration as a tool for genai, and for the others I just asked Claude Code to apply the same pattern. After a brief moment where I thought Claude Code had one-shotted everything, I did a second pass to fix its dumb decisions [2]. And that's it! Now we have adapters for mistral.rs, rig, and AI SDK as well.

Next steps

Safe access to dangerous APIs

As I said previously, I was very aggressive in limiting what the AI has access to in the Lua runtime. But this makes its application very limited - if we can't write to a file or make a network request, there's little we can do.

You can still expose anything you want through Rust bindings (and since that happens at the harness level, there isn't much we could do to secure it anyway), but this makes for poor DX. I don't want to have to go down to Rust to define everything.

One idea I want to experiment with is creating an API for access control in Lua. Something like this:

```lua
io = onetool.require("io")

-- If granted, the script can now use `io` tools normally
io.read(...)
```

In this case, onetool.require would verify if the caller has access to this API. It could even implement an access control policy, where it returns control to the operator to decide if the API is allowed or not. And we could scope this access to the session, or the call, or even add time limits, where the LLM would have to re-require after a certain period to ensure it still has access to that API.
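Here's a rough sketch of what the policy behind such a `require` could look like on the Rust side. None of this exists in onetool yet: `AccessPolicy` and `Grant` are hypothetical names, and the three variants map to the session, per-call, and time-limited scopes described above. The current time is passed in explicitly so expiry is easy to test.

```rust
use std::collections::HashMap;
use std::time::{Duration, Instant};

/// How long a permission lasts. Hypothetical, for illustration only.
#[derive(Debug, Clone, Copy, PartialEq)]
pub enum Grant {
    /// Valid until the session ends.
    Session,
    /// Valid for a single `require`, then revoked.
    Once,
    /// Valid until the given instant, after which it must be granted again.
    Until(Instant),
}

#[derive(Default)]
pub struct AccessPolicy {
    grants: HashMap<String, Grant>,
}

impl AccessPolicy {
    /// Called by the operator to allow an API.
    pub fn grant(&mut self, api: &str, grant: Grant) {
        self.grants.insert(api.to_string(), grant);
    }

    /// What a `require(api)` call would consult. Unknown APIs are denied.
    pub fn require(&mut self, api: &str, now: Instant) -> bool {
        match self.grants.get(api).copied() {
            Some(Grant::Session) => true,
            Some(Grant::Once) => {
                // Single use: consume the grant.
                self.grants.remove(api);
                true
            }
            Some(Grant::Until(deadline)) if now < deadline => true,
            Some(Grant::Until(_)) => {
                // Expired: drop it so the operator has to grant it again.
                self.grants.remove(api);
                false
            }
            None => false,
        }
    }
}

fn main() {
    let start = Instant::now();
    let mut policy = AccessPolicy::default();
    policy.grant("io", Grant::Until(start + Duration::from_secs(60)));
    println!("io allowed now: {}", policy.require("io", start));
}
```

Handing the decision back to the operator would fit in the `None` arm: instead of returning false right away, the harness could prompt and then record whichever grant the operator picks.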

Another dimension we could add to this access policy is the module, so we have a hierarchy of permissions, instead of only controlling at the agent level:

```
agent                      // only basic access, plus permission to call `explore` and `web_search`
|- modules/explore.lua     // access to io.read
|- modules/web_search.lua  // access to http.request
```

This hierarchy means the agent can't make HTTP requests or read files directly - only the submodules it uses can.
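A minimal sketch of that hierarchy as data, assuming permissions are looked up by the identity of whoever is calling (the agent or a specific module). `Permissions` and `example_policy` are hypothetical names, and the capability strings are just the ones from the tree above.

```rust
use std::collections::{HashMap, HashSet};

/// Maps each caller (the agent or a module path) to what it may use.
/// Hypothetical type, for illustration only.
#[derive(Default)]
pub struct Permissions {
    by_caller: HashMap<String, HashSet<String>>,
}

impl Permissions {
    pub fn allow(&mut self, caller: &str, capability: &str) {
        self.by_caller
            .entry(caller.to_string())
            .or_default()
            .insert(capability.to_string());
    }

    /// Deny by default: unknown callers and unlisted capabilities get `false`.
    pub fn can(&self, caller: &str, capability: &str) -> bool {
        self.by_caller
            .get(caller)
            .map_or(false, |caps| caps.contains(capability))
    }
}

/// The tree from the post, written out as explicit grants.
pub fn example_policy() -> Permissions {
    let mut p = Permissions::default();
    p.allow("agent", "explore");
    p.allow("agent", "web_search");
    p.allow("modules/explore.lua", "io.read");
    p.allow("modules/web_search.lua", "http.request");
    p
}

fn main() {
    let p = example_policy();
    println!("agent -> io.read: {}", p.can("agent", "io.read"));
    println!("explore.lua -> io.read: {}", p.can("modules/explore.lua", "io.read"));
}
```

Permissions are deliberately not inherited here: the agent being allowed to call `explore` says nothing about the agent itself being allowed to use `io.read`.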

This seems like a fun problem to solve, and something that would differentiate onetool from other code execution tools.

Onetool MCP Server

There's an implementation done, but I'd like to improve on that, so it can be used in existing agents, like Claude Code, Cursor, Windsurf, etc. For that I think the bare minimum would be to be able to extend the agent's capabilities only by writing Lua. To make something that could actually replace existing tools, I'll have to solve the permissions system.

Add MCP compatibility

Even if we decide, as an industry, that MCPs were the wrong abstraction, we still have a huge ecosystem of useful things built as MCPs.

So how do we leverage that rich ecosystem?

One idea is to be able to call MCP servers from Lua. Remember, MCP is just a JSON-RPC API. So in theory, if we have a JSON-RPC client in Lua, we can just call the servers directly, and print/return the result. Something like:

```lua
-- https://github.com/brave/brave-search-mcp-server
config = {
  command = "npx",
  args = {"-y", "@brave/brave-search-mcp-server", "--transport", "http"},
  env = { BRAVE_API_KEY = "..." }
}

-- Uses the config, then communicates with the MCP to get the tools and build a table of methods
brave_search_mcp = onetool.build_mcp_client(config)

-- We could have the tool schema saved in a special key so the LLM can access it
print(brave_search_mcp.__tools)

brave_search_mcp.brave_web_search({query = "How to add MCP to Deepseek?"})
```

This would mean LLMs could call MCPs from code, which would combine the power of code execution with the breadth of functionality already available as MCPs. The best of both worlds.
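To make the "just JSON-RPC" part concrete, here's a sketch of the message that a call like the `brave_web_search` one would turn into on the wire. MCP tool invocations are JSON-RPC 2.0 requests with the method `tools/call` and a `name` plus `arguments` in `params`. The helper names are mine, the sketch only supports string arguments, and it skips the `initialize` handshake and the `tools/list` call that a real client would do first.

```rust
/// Escapes a string for use inside a JSON string literal.
pub fn json_escape(s: &str) -> String {
    let mut out = String::with_capacity(s.len());
    for c in s.chars() {
        match c {
            '"' => out.push_str("\\\""),
            '\\' => out.push_str("\\\\"),
            '\n' => out.push_str("\\n"),
            '\r' => out.push_str("\\r"),
            '\t' => out.push_str("\\t"),
            // Remaining control characters must be written as \u00XX.
            c if (c as u32) < 0x20 => out.push_str(&format!("\\u{:04x}", c as u32)),
            c => out.push(c),
        }
    }
    out
}

/// Builds a JSON-RPC 2.0 `tools/call` request body.
/// Simplification for this sketch: every argument is a string.
pub fn tools_call_request(id: u64, tool: &str, args: &[(&str, &str)]) -> String {
    let name = json_escape(tool);
    let arguments = args
        .iter()
        .map(|(key, value)| format!(r#""{}":"{}""#, json_escape(key), json_escape(value)))
        .collect::<Vec<_>>()
        .join(",");
    format!(
        r#"{{"jsonrpc":"2.0","id":{id},"method":"tools/call","params":{{"name":"{name}","arguments":{{{arguments}}}}}}}"#
    )
}

fn main() {
    let body = tools_call_request(
        1,
        "brave_web_search",
        &[("query", "How to add MCP to Deepseek?")],
    );
    println!("{body}");
}
```

In a real implementation I'd reach for a proper JSON library instead of hand-rolled escaping. The point is only that the table of methods built by `build_mcp_client` could be a thin wrapper that fills in `name` and `arguments`.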

Another cool benefit would be that this way we could add MCP support to any LLM, just by adding one tool: onetool.

Evaluation pipeline

Remember when I said this started with me playing with LLMs? Well, one of the reasons I started this was that I was interested in playing around with evaluating LLM applications. I want to build something in that sense, to compare token usage, accuracy scores, etc.

It's easy to see the value in things like the needle in a haystack example, which some LLMs can't even solve by default because of context window limits, but for more interactive or less obvious examples it would be good to have some metrics.
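As a starting point, the metrics could be as simple as this sketch. `RunRecord` and `summarize` are hypothetical names, nothing like this exists in onetool yet, and the numbers in `main` are made up purely to exercise the code.

```rust
/// One evaluated run of a task. Hypothetical shape, for illustration only.
#[derive(Debug, Clone)]
pub struct RunRecord {
    /// Total tokens exchanged with the LLM provider during the run.
    pub tokens_used: u64,
    /// Whether the final answer matched the expected one.
    pub correct: bool,
}

#[derive(Debug, PartialEq)]
pub struct Summary {
    pub runs: usize,
    /// Fraction of runs that were correct, between 0.0 and 1.0.
    pub accuracy: f64,
    pub mean_tokens: f64,
}

/// Aggregates runs into accuracy and mean token usage.
/// Returns `None` for an empty slice to avoid dividing by zero.
pub fn summarize(records: &[RunRecord]) -> Option<Summary> {
    if records.is_empty() {
        return None;
    }
    let runs = records.len();
    let correct = records.iter().filter(|r| r.correct).count();
    let total_tokens: u64 = records.iter().map(|r| r.tokens_used).sum();
    Some(Summary {
        runs,
        accuracy: correct as f64 / runs as f64,
        mean_tokens: total_tokens as f64 / runs as f64,
    })
}

fn main() {
    // Made-up numbers, only to show the shape of the output.
    let records = vec![
        RunRecord { tokens_used: 1000, correct: true },
        RunRecord { tokens_used: 3000, correct: false },
    ];
    println!("{:?}", summarize(&records));
}
```

Running the same tasks with and without code execution and comparing the two summaries would already say a lot about whether the token savings are real.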

Recursive LLMs

Every so often something new takes over the LLM landscape, and RLMs (recursive LLMs) are the latest. It should be trivial (but fun) to build one using onetool, and I want to see if I can show that code execution is a generalization of RLMs.

Conclusion

This has been fun to build, and I want to see how far I can take this. I also want to see how it fares in the real world: it was very interesting to see how bad my initial prompts were and how much effect they had on the usefulness of the tool!

All in all, the more I play with it, the more I see this as a useful tool.

[1] Not my first choice, I'll admit. I did manage to squeeze in at least one bloquinho the following weekend, so at least they won't cancel my CPF.

[2] Ok, not totally Claude's fault. I had to change some stuff to make sure the LuaRepl was Sync+Send.